#Introduction

The internet, dominated by the one and only web, has enjoyed a very comfortable and constant boom over the last couple of decades, with every single year opening doors to new trends, new stars, new means of socializing with others, and just being there, permanently connected. Sites like Facebook have risen from the ashes of giants like MySpace in this incessant turf war, while others, like Reddit, have found their own niche in this world.

However, because of its violent surge towards the top of modern human society, the web was forced to develop in ways that were never envisioned, in a process very similar to force-feeding. Today the web looks like a really fat, mutated monster with countless patches applied just to keep its guts on the inside. Yes, the web is now this young, thoughtless giant carrying all of us on its back while we’re tirelessly fixing it in hopes of reaching the next checkpoint.

You may argue that this view of the situation is pointlessly gloomy, as the web has a lot of great tricks up its sleeve and more than enough room to grow. I agree: its merits and potential are definitely there. But bear with me for the next few minutes as I try to explain why I think the web is terribly broken, and why it needs to be repaired before any more patches are added.

#Users vs. web developers

Like in any other trial, there are two parties. On one hand, there are the web developers actively and collaboratively knitting the outer edges of the web. On the other, there are the users who are crawling it. Being part of a jury that goes by the name of “common sense”, I’d go so far as to call both parties equally guilty: the users, because of their insatiable thirst for something new to devour and their lack of patience in waiting for it, and the web developers, because they blindly submit to these requests by constantly stacking layer upon layer of patches on top of our old friend.

But enough with the charges. This problem affects everyone. Whether you are a user or a developer, the sluggish state of the web impacts you. As a user, you get pages that load slowly, browsers that drain your battery like a leaky bathtub drains water, and scrolling that is sometimes as smooth as sawing wood because of all the JavaScript running in the background. As a developer, you need to learn countless programming languages and tools, you waste most of your time finding workarounds and fixing other people’s code, you write boilerplate code almost exclusively, and the list goes on and on.

#Contents

- A Brief History of Patching

- World Wide Blunders

- The Dawn of a New Age

- Working towards Viable Solutions

- Conclusion?

#A Brief History of Patching

As you all know, the web was created as a network where you could easily access static pages (text and images) without having to download every single document and open it manually. It was pure HTML magic back then, but this magic didn’t last long. People found these pages rigid, so HTML started to grow and lose its standards. A solution was needed, and it came in the form of Cascading Style Sheets. Around the same time, people added scripting capabilities to the browser with something that would ultimately be called JavaScript. Due to lack of performance and sheer necessity, Flash came out, with HTML5 following years later. Countless other plugins and technologies were added on top of that, but, in the interest of time, I will leave it at this.

#World Wide Blunders

#The Technologies

HTTP

The first version of the hypertext transfer protocol was used to transfer text, not images, not binary–just text. We’re now using it to transfer all kinds of data, including binary. Nonetheless, text is still the choice for the headers of the requests and responses. Do they really need to be humanly readable? This human readability costs a lot. Instead of sending a couple of bytes, we’re sending more than a dozen. Wouldn’t it be great if you could just compare a few bytes instead of laboriously parsing a response? The fact that we have plugins that do this for us doesn’t make it right.
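
To put rough numbers on this, here is a minimal sketch in JavaScript. The one-byte header ID and the `encodeBinaryHeader` helper are invented for illustration and do not correspond to any real protocol; only the text form follows the usual `Name: value` line ending in CRLF.

```javascript
// Hypothetical comparison: a text header line versus a made-up binary encoding.
// The numeric header ID below is an assumption for illustration only.
const HEADER_IDS = { "Content-Length": 0x01 };

// Text form: name, colon, space, value, CRLF.
function encodeTextHeader(name, value) {
  return new TextEncoder().encode(`${name}: ${value}\r\n`);
}

// Invented binary form: 1 byte for the header ID, 4 bytes for an unsigned integer value.
function encodeBinaryHeader(name, value) {
  const bytes = new Uint8Array(5);
  const view = new DataView(bytes.buffer);
  view.setUint8(0, HEADER_IDS[name]);
  view.setUint32(1, value); // big-endian by default
  return bytes;
}

const textHeader = encodeTextHeader("Content-Length", 1024);
const binaryHeader = encodeBinaryHeader("Content-Length", 1024);

console.log(textHeader.length);   // 22 bytes
console.log(binaryHeader.length); // 5 bytes

// Reading the binary value back is a fixed-offset read, with no string parsing.
const parsedLength = new DataView(binaryHeader.buffer).getUint32(1);
console.log(parsedLength); // 1024
```

The point of the sketch is only the ratio: the receiver of the text form has to scan for the colon and convert digits, while the receiver of the binary form compares one byte and reads four.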

HTML

As was previously stated, HTML pages had a very slow start. The first version could only display formatted text and links. The whole page was just a pile of static tags patiently awaiting their rendering. Naturally, people added so much more to the poor old web page that it now behaves more like a very complicated app than a static resource. The problem with all this is that every single one of these additions was designed as a separate language layer on top of HTML. This meant that instead of extending the functionality of a tool already in use, we created other tools that HTML works in conjunction with, and that developers need to learn and keep track of.

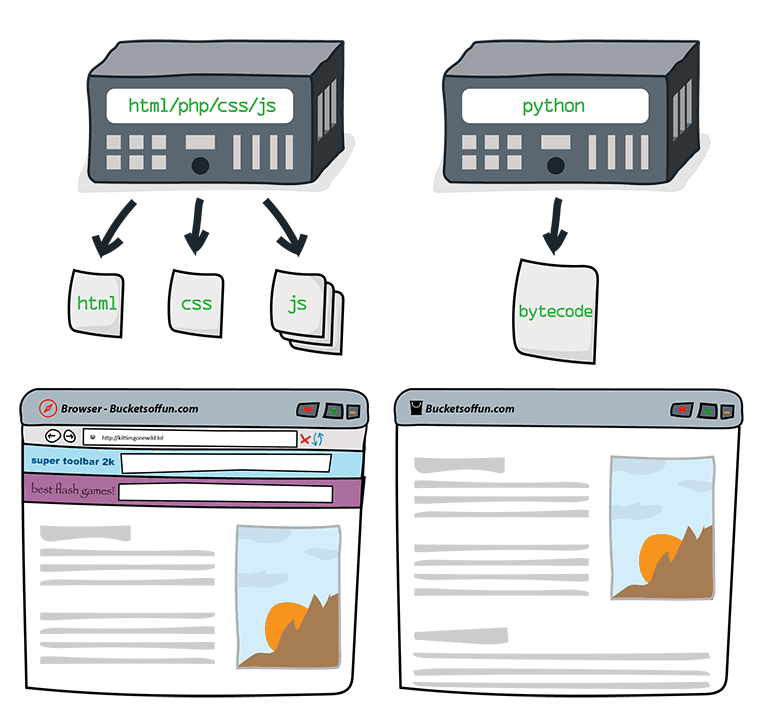

People tend to take things as they are and use them without questioning their actual effectiveness too much. After all, when something is created, the developers will think about all the plausible use cases, right? The short answer is “not always”. Sometimes we make decisions based on what is needed and sensible at that moment; other times we have to rush and ship on time without further ado. It so happens that not many people ask themselves why HTML, the language that the browser uses, as opposed to people, has to be humanly readable. Instead of having a cumbersome parser that the browser uses to make sense of the whole page, we could use machine-friendly bytecode that’s faster, less resource-hungry, and probably at least tenfold smaller. A developer will have the code that gets compiled into the bytecode anyway, so why would they need a humanly readable version inside the browser as well?

CSS

The greatest problem of CSS is that it exists outside of HTML. If HTML is supposed to be the structure of a web page, why do we have to pack its styling separately? Is it really intuitive to design a downright ugly version of the page in HTML that looks nothing like the final thing and then go to the CSS side of things and change it? After doing that, a frontend developer has to go back and forth between HTML and CSS until everything works perfectly.

I have to ask why CSS and HTML don’t follow an approach similar to that of some of the best native UI toolkits, like Qt or Android. Their structure and styling are not separated, their tags are not ambiguously called div, p, or tr, and designers can use actual tools to flesh out their designs instead of having to write CSS and HTML like every other developer.

I won’t delve into the nitty-gritty of which features CSS is missing, but I will mention a few, like inheritance, variables, and nesting, all of which are available in Sass. However, Sass is not a solution. It would be if it were the standard and everybody used it, but since it’s not, the web still suffers from the aforementioned problems. Learning CSS before Sass is like learning Java bytecode before Java, but people still do it because Sass is not the standard.

I will end this section by adding something that’s not really the fault of CSS but definitely makes web development faulty, and that’s the inconsistency between browsers. This issue is so disgusting and explicit that there’s simply nothing else to add.

JavaScript

If there’s one thing that’s particularly bad about the web and drags it down like a wrecking ball tied to its ankles, it’s JavaScript. This language has been particularly cruel to developers and browsers since its first days under the sun, driving people mad and slowing down websites. There are a lot of pressing issues concerning JavaScript, like its dominance and its standardization (or lack thereof), but I will begin by talking about its design flaws as a programming language.

One of the most annoying issues people have when writing JavaScript is the automatic type conversion. Instead of being as natural and logical as possible, it’s unintuitive, and it tries to solve nonexistent faults by introducing bigger ones that are more than noticeable. To begin with, feast your eyes on this:

    var crazy = "1";
    crazy + 1; // -> "11"
    crazy - 1; // -> 0
    crazy++; // -> 1, and crazy is now the number 2
    crazy = "1";
    crazy += 1; // -> "11"

Right here we have a fabulous case of analogous operators working in totally different ways, which is pointlessly counterintuitive. Addition is overridden by string concatenation, but subtraction is not, because there is nothing for it to be overridden by. Here’s another one that I absolutely adore:

    0 == "0" // -> true
    0 == "" // -> true
    // up to this point you may argue that it's not quite intuitive,
    // but it could make sense in some remote cases
    "0" == "" // -> false

This is simply mind-blowing. Mind-blowingly JavaScript if you ask me. This one defies the simple and ubiquitous law of equality by making the operator “smarter” and stripping away its transitivity. The point is that no one should sacrifice sane logic when designing a language just to make automatic conversions possible.

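For comparison, here is a short sketch that runs the same three values through both `==` and the strict operator `===`, which never converts its operands. The `compareAll` helper is just a name made up for this example.

```javascript
// The same three values from the example above.
const values = [0, "0", ""];

// Builds a list of pairwise results for a given comparison function.
function compareAll(isEqual) {
  const results = [];
  for (let i = 0; i < values.length; i++) {
    for (let j = i + 1; j < values.length; j++) {
      results.push(isEqual(values[i], values[j]));
    }
  }
  return results;
}

// Pairs are compared in this order: (0, "0"), (0, ""), ("0", "").
const loose = compareAll((a, b) => a == b);   // [true, true, false]
const strict = compareAll((a, b) => a === b); // [false, false, false]

console.log(loose, strict);
```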
JavaScript also implements every number as an IEEE 754 double-precision value, which means that you cannot get the precision you would expect from ordinary integers. This is why it’s a bad idea to use doubles everywhere:

    10000000000000000 == 9999999999999999; // -> true

Comparisons are actually so broken in JavaScript that people take advantage of its automatic type conversion in order to do type-specific comparisons:

    "" + left == "" + right; // compares strings
    +left == +right; // compares numeric values
    !left == !right; // compares booleans

The type system in JavaScript is broken to such an extent that this is possible. Not convinced? Try some of the code above in a console.
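
If you do open a console, the following sketch may be a useful companion. It spells out the same three comparisons with explicit conversions, and it checks the 16-digit number from the earlier comparison with `Number.isSafeInteger`. The `left` and `right` values are sample inputs I picked; `BigInt` literals are a more recent addition to the language, so an older engine may reject the last part.

```javascript
const left = "42";
const right = 42;

// Explicit equivalents of the coercion tricks above.
const sameAsStrings = String(left) === String(right);    // "42" === "42"
const sameAsNumbers = Number(left) === Number(right);    // 42 === 42
const sameAsBooleans = Boolean(left) === Boolean(right); // true === true

// Doubles represent integers exactly only up to 2^53 - 1.
const big = 9999999999999999; // silently rounded to 10000000000000000
const isExact = Number.isSafeInteger(big); // false

// BigInt literals (the n suffix) keep the two values distinct.
const stillDistinct = 10000000000000000n !== 9999999999999999n; // true

console.log(sameAsStrings, sameAsNumbers, sameAsBooleans, isExact, stillDistinct);
```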

But enough with the design choices of the language. Yes, implicit global variables, an unintuitive type system, the unpredictable behavior of this for newcomers, the lack of block scoping, and an unprecedented mix of paradigms all contribute to its frustration-causing nature for people who are trying to get the hang of the language, but the rest, the people who actually know what they’re doing, can be pretty productive using it.
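
Two of the items on that list are easy to show in a few lines. This is a minimal sketch with made-up names (`countWithVar`, `countWithLet`, `counter`); the `let` keyword used for contrast comes from ES2015, so it assumes a reasonably modern engine.

```javascript
// `var` is scoped to the enclosing function, not to the block.
function countWithVar() {
  for (var i = 0; i < 3; i++) {}
  return i; // still visible after the loop
}

// `let` is scoped to the block, so the loop variable stays inside the loop.
function countWithLet() {
  for (let i = 0; i < 3; i++) {}
  return typeof i; // i does not exist out here
}

// `this` depends on how a function is called, not on where it was defined.
const counter = {
  isBoundToCounter() {
    return this === counter;
  },
};
const detached = counter.isBoundToCounter;

console.log(countWithVar());             // 3
console.log(countWithLet());             // "undefined"
console.log(counter.isBoundToCounter()); // true
console.log(detached());                 // false
```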

There’s a reason why JavaScript is so popular, and that reason is not productivity, robustness, practicality, or anything of the sort. The reason it’s so popular is that it’s the only choice. When writing a web server nowadays, you get more and more options besides PHP, but when it comes to client-side functionality, JavaScript is the only way, very much like how the majority of today’s PCs only run x86 instructions. The difference is that JavaScript is actually a language, a human-friendly abstraction of what the CPU understands. Nevertheless, browsers and users are treating it like machine code.

This is how CoffeeScript was born: an expressive language that makes JavaScript bearable, complete with object orientation and a smart functional style, which unfortunately has to be compiled to the same old JavaScript because browsers chose not to implement it. This is why, as with Sass, I don’t see it as a viable solution: no matter how good CoffeeScript is, people still don’t see a future standard in it.

The same thing happened with jQuery. This gigantic library is used by over 60% of the top 10,000 most popular web pages, and it still doesn’t come with JavaScript by default. Yes, you can add it yourself, but why does this clutter have to be there? Someone who starts learning JavaScript with jQuery from the beginning will find this confusing and may never grasp where JavaScript ends and jQuery begins.

To conclude this awfully long chatter about JavaScript, I will hammer this final nail into its coffin: people should be able to write client-side code in any language they want, as long as it doesn’t choke the browser. And between us and this fabulous goal sits none other than JavaScript’s undeniable popularity.

PHP

For a very long time, PHP was the go-to language of web servers. If you needed your pages to display dynamic content and maybe even receive data from the users, PHP was your only solution. Nowadays you can choose from a myriad of programming languages and frameworks to help you get your server going, but the old templating language is still what most people choose when they want to have full control or just design a simple website; PHP is the yardstick for backends, and this sucks, because PHP is a flawed language.

And I’m not the only one saying it. Here’s a neat analogy:

> I can’t even say what’s wrong with PHP, because— okay. Imagine you have uh, a toolbox. A set of tools. Looks okay, standard stuff in there.
>
> You pull out a screwdriver, and you see it’s one of those weird tri-headed things. Okay, well, that’s not very useful to you, but you guess it comes in handy sometimes.
>
> You pull out the hammer, but to your dismay, it has the claw part on both sides. Still serviceable though, I mean, you can hit nails with the middle of the head holding it sideways.
>
> You pull out the pliers, but they don’t have those serrated surfaces; it’s flat and smooth. That’s less useful, but it still turns bolts well enough, so whatever.
>
> And on you go. Everything in the box is kind of weird and quirky, but maybe not enough to make it completely worthless. And there’s no clear problem with the set as a whole; it still has all the tools.
>
> Now imagine you meet millions of carpenters using this toolbox who tell you “well hey what’s the problem with these tools? They’re all I’ve ever used and they work fine!” And the carpenters show you the houses they’ve built, where every room is a pentagon and the roof is upside-down. And you knock on the front door and it just collapses inwards and they all yell at you for breaking their door.
>
> That’s what’s wrong with PHP.

The same author wrote one of the most complete articles about the bad design of PHP, “PHP: a fractal of bad design”, which is also where the above quote comes from. I highly recommend reading it.

Now, for the people who already TL;DRed the exceptional article, I will provide a few necessary examples from the core to back my point.

The same weak typing from JavaScript makes a comeback in PHP with leaner, meaner conversions.

    99 == "99heya"; // -> true (before PHP 8)
    "1e5" == "100000"; // -> true
    "TRUE" == TRUE; // -> true
    "TRUE" == 0; // -> true (before PHP 8)
    TRUE == 0; // -> false

The designers of PHP also thought it might be a good idea to use the . operator for concatenation. Most OO languages use this for accessing attributes and methods, but in PHP you have to use two characters instead of one, namely ->, forcing you to triple the number of keys pressed.

And this would actually be problematic if PHP was an object oriented language, but it’s not. Most of the language works in a similar way to C, where you pass arguments to conservative, mutability-endorsing functions that change the state of objects. This would be acceptable if the language didn’t have dynamic calls or OO capabilities, which it does. So if you do have a set of working principles which are there to make the language easier to use and which sacrificed performance to make this possible, why not actually use them everywhere in the language? Mixing them up is worse than not having them at all.

And the inconsistency doesn’t stop here. PHP doesn’t know when it needs to use underscores: sometimes it does (str_replace), sometimes it doesn’t (strpos). PHP cannot get the argument order of its C-like array manipulation functions straight either, as the position of the actual array varies: array_map takes the callback first and the array second, while array_filter takes them the other way around.

Yes, PHP does have a website dedicated to repairing its poor design and it vastly exceeds what was brought forth here.

Fortunately, there are a lot of alternatives to PHP today, so there’s not much reason to complain about it. The only remaining issue is its de facto association with web servers.

SQL

I want to start off by saying that there’s a reason people created object-relational mappings between database objects and usual objects in the first place–because they didn’t want to handle SQL anymore. SQL is just another language that you have to remember, another layer of abstraction that you have to manage and keep track of.

Of course, you may need finer control of your database than an ORM offers, but this doesn’t mean that you have to leave the comfort of your programming language or your programming habits to get it. If there’s a language that you can use for backend development, it should be able to control the database just as delicately as SQL does.
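
To give an idea of what staying inside your language could look like, here is a minimal sketch of a query builder. The `Query` class is hypothetical and not modeled on any particular library; it only assembles SQL text and a parameter list, and it assumes table and column names come from trusted code rather than user input.

```javascript
// A hypothetical, minimal query builder; not modeled on any particular library.
// Assumption: table and column names are trusted; only values become parameters.
class Query {
  constructor(table) {
    this.table = table;
    this.conditions = [];
    this.params = [];
    this.limitCount = null;
  }

  // Adds a "column operator ?" condition and records its value as a parameter.
  where(column, operator, value) {
    this.conditions.push(`${column} ${operator} ?`);
    this.params.push(value);
    return this; // allow chaining
  }

  limit(count) {
    this.limitCount = count;
    return this;
  }

  // Produces the SQL text plus the parameter list a database driver would bind.
  build() {
    let sql = `SELECT * FROM ${this.table}`;
    if (this.conditions.length > 0) {
      sql += ` WHERE ${this.conditions.join(" AND ")}`;
    }
    if (this.limitCount !== null) {
      sql += ` LIMIT ${this.limitCount}`;
    }
    return { sql, params: this.params };
  }
}

const query = new Query("users")
  .where("age", ">=", 18)
  .where("country", "=", "RO")
  .limit(10)
  .build();

console.log(query.sql);    // SELECT * FROM users WHERE age >= ? AND country = ? LIMIT 10
console.log(query.params); // [ 18, 'RO' ]
```

SQL is still generated underneath, so this is a layer on top rather than a replacement, which is exactly the kind of compromise this article complains about.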

#The General Issues

Human Readability

Most of the stuff the web is made of is humanly readable. This means that the poor computers have to parse all of this data to make everything edible. Similarly to how our bodies have a hard time digesting unfamiliar compounds because they have to break complex bonds, computers have to translate long words into simple bits that are faster for them to use.

Still, there is little use to it. Does an end user need to see the source code of the web page? Does the header of a request have to be humanly readable? Apps on phones don’t share their source code with every single user and look how popular they are.

Using binary formats and compiling everything to bytecode would help a lot. Websites would be much smaller in size and faster to load, pages would be more responsive and battery efficient, and all of this would be platform-independent and language-independent, in a similar fashion to how the JVM can run Java, Python, Ruby, Scala, and more on Linux, Windows, and OS X.

Polyglotism

Let’s say you want to build a relatively modest website. You start by creating a static page in HTML. Everything is fine and dandy until you realize that you want to add a date to the page, so you add in PHP and create a few more pages. You want your users to have accounts, which means including a database and querying it with SQL. Simple HTML doesn’t cut it anymore because it’s ugly, so you add some style with CSS. A few forms and buttons later, you find yourself writing more JavaScript than anything else. To build a very simple website you had to use no fewer than five different languages. Now you may argue that they’re not so hard or different and that the overhead is small, but try telling this to someone who’s absolutely new to web development.

You shouldn’t have to be a polyglot to be a web developer. What you should be able to do is choose a programming language of your liking and use that for the whole process. Every language should have all the important standard libraries for web development and should offer the same capabilities. When choosing the language, the only aspects you should take into consideration would be how familiar you are with it and how much you actually like it. Arguments like “I’m not using X because it doesn’t have a good library for…” or “I don’t like Y, but I’m using it because this framework is so much easier to use.” should become obsolete.

Moreover, a solution with universal bytecode across platforms would allow the development of one single language-independent plugin which would eliminate the problem of inconsistency and which would be very easy to develop.

Workarounds and Time Investments

I remember when I first tried web development many years ago. I was trying to accomplish a very simple task that had no obvious solution, so I asked a question. The answer that I got was staggering. People had agreed that the best solution to this was a workaround. This little incident changed my view on web development which I now see as a sandwich of patches that’s so thick that it barely holds itself together, even though it’s tied with strings on its side to prevent this from happening.

The problem with workarounds is that although they offer fast solutions, they will definitely cost more time in the long run. Most companies enjoy a quick buck, but they will consider a long-term solution if it brings more profit in the end.

Right now, a lot of time is invested in solving the problems of the web by patching the old stuff. If we could add up all the man hours used for all these patches and invest them in a fresh and clean design, we’d end up with a lot of spare man hours. Some of these patches began from a low level, but they still built upon the same faulty foundation.

What I’m trying to emphasize is not the futility of such patch-creating jobs, but the fact that these are not solutions for the greater good of developers or users. People should find new and exciting problems to solve, problems that have impact like finding a solution to antimicrobial resistance. I don’t see how anyone’s purpose can be to hide the dust under a pretty new rug.

#Where we’re at

Frameworks

People thought it would be a great idea to add a layer of abstraction on top of the web server and database, and it did indeed make things much easier. By using a web framework like Rails you can set up a simple blog from zero to working in only a few hours without having to put up with the kind of misery that only PHP can cause. This was supposed to be the revolution of the web, and it was a revolution, for better or worse, just not the kind of revolution a lot of us were expecting.

These frameworks rely on the idea that most people want to achieve a few specific goals, and they make sure those goals are easy to implement. Unfortunately, if you want to stray from the beaten path, you’re bound to hit a dead end sooner or later. In its eagerness to overperform, a framework makes too many decisions on your behalf so as not to overwhelm you with countless options. Now, imagine a method that calls a lot of other methods, and you want to make a change somewhere deep down the stack. If the framework doesn’t provide an easy-to-use handle, you will have a lot of overriding to do. Unless you can find a plugin that does exactly that, that is.
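
Here is what that handle looks like in the happy case. The `FrameworkController` class and its method names are invented for this sketch and do not come from Rails or any other real framework.

```javascript
// A hypothetical framework class: `handle` is the public entry point,
// and it calls several internal steps in a fixed order.
class FrameworkController {
  handle(request) {
    const user = this.authenticate(request);
    const data = this.loadData(user);
    return this.render(data);
  }

  authenticate(request) {
    return request.user || "guest";
  }

  loadData(user) {
    return { title: `Posts for ${user}` };
  }

  render(data) {
    return `<h1>${data.title}</h1>`;
  }
}

// The application only wants a different data-loading step.
// Because `loadData` is a separate, overridable method, one override is enough.
// Had the framework inlined that step inside `handle`, the whole `handle`
// method would have to be copied and rewritten instead.
class ArchiveController extends FrameworkController {
  loadData(user) {
    return { title: `Archived posts for ${user}` };
  }
}

const html = new ArchiveController().handle({ user: "ana" });
console.log(html); // <h1>Archived posts for ana</h1>
```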

Another problem that a lot of these frameworks have is lack of good documentation. If you’re used to the kind of documentation a programming language like Ruby or Java can offer, expect to be disappointed. Most of these frameworks offer a getting-things-done kind of documentation. It’s easy to figure out how to implement scenarios that are already documented and quite difficult to figure out how to integrate your own implementation in the framework. Yes, a lot of times it’s just easier to write the changes that you need than to integrate them into the framework.

All in all, web frameworks are a great idea, but they’re still built on top of the same questionable stack, with kind of the same workaround-oriented mentality. The good thing is that they’re definitely on the right track.

Node.js

The honest and honorable idea of Node.js is to offer a good server-side platform as an alternative to PHP. It’s fast, and it offers event-driven, non-blocking I/O. Apart from this, you can use the same programming language–sucky as it may be–for both the client and the server.
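
For anyone who hasn’t seen the non-blocking style, here is a minimal sketch of the idea. It uses `setTimeout` as a stand-in for a slow disk or database read, which is my simplification; real Node.js code would pass a callback to an I/O function instead.

```javascript
// Records the order in which things happen.
const order = [];

order.push("request received");

// Stand-in for slow I/O (a database or disk read). setTimeout is used here
// only to simulate the wait; the callback runs later, once the event loop is free.
setTimeout(() => {
  order.push("I/O finished");
  console.log(order.join(" -> "));
  // request received -> handling other work -> I/O finished
}, 10);

// This line runs immediately, without waiting for the simulated I/O.
order.push("handling other work");
```

The single thread never sits idle waiting for the slow operation, which is where the platform’s speed claim comes from.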

One thing that I have against it is that it pulls the whole V8 runtime out of Chrome to run on. The runtime was designed to run inside browsers, and now we’re pulling the whole thing out of the browser to run our server-side code. It’s almost like asking the browser to run the server only for the convenience of using JavaScript. I’m not trying to say this was a bad decision, because it was most probably the best decision for such a project. The problem is that it continues the workaround trend.

#The Dawn of a New Age

#On the Web

Dart

This is Google’s solution to the JavaScript problem: a fast virtual machine that can run a new, Java-like language that’s concise and easy to write. Running Dart in its VM is faster than running the JavaScript counterpart on V8, but not by a wide margin. It also has a ton of libraries which make web development a lot easier.

Its hamartia is that only Chrome can run it natively. Google provides a Dart-to-JavaScript compiler, but this is obviously not a long-term solution, which leaves Dart prey only for the enthusiast market.

NaCl

A spiritual successor of ActiveX, Native Client is a way to run native code within the browser on the client’s CPU. It differs from its forefather by sandboxing every process.

Native Client runs an architecture-agnostic subset of the LLVM bytecode. This means that you can compile your favorite programming language to this bytecode and run it instead of JavaScript.

Nevertheless, many of the browser companies advocated against the idea of hiding the source code of the web, refusing to implement NaCl which leaves it in a Dart-like state.

#Everywhere

Atom & Brackets

Both Atom and Brackets seek to provide open approaches to editors, with clean plugins, a central repository, and easy extension with the tools of the web. Compare this to Vim or Emacs and you will start to notice a much tidier package that’s easier to set up, with plugins that are more compatible while not giving up on the idea of free software.

But this is where the niceties end. Both of them need to boot up the V8 runtime in order to run the editor. Both of them are painfully slow compared to any modded Vim or Emacs. Both feel like faster browsers that you can use to edit files, which is not necessarily a good thing.

OSs on the Web

Operating systems have pretty fast frontends, using either native executables or low-level VMs to provide the user with a fluid experience. Mozilla came up with the idea of making every app on its new OS a web app, as if the web wasn’t present enough in absolutely every corner.

The question is this: Is web development such a better experience in comparison to the classical UI toolkits that we actually need to build a whole OS around it? Because it’s definitely not a question of improving performance.

Imagine a future where every app lags and where you need to use three different languages to write one–of which one is the almighty JavaScript. There must be a reason why iOS and Android didn’t start with a similar philosophy, mustn’t there?

#Working towards Viable Solutions

#Applying basic Computer Science Values

Most of the recurring core issues of the web have plagued computers long before its advent. Code duplication, workarounds, toxic environments, entangled dependencies, and designing software without performance in mind have all troubled the minds of computer scientists for decades, and some solutions have been found. Most of them come in the form of principles and mindsets which, when in use, lead to much better end results.

One of these values is code reuse. Since a lot of the tools and plugins used for the web are now open source, code reuse should be a no-brainer. And it is, but a lot of people find themselves writing the same plugin that someone else has written and the same form-related boilerplate code. This happens mostly because people cannot concur, and this is a great thing. It’s just that code should be written in a way that makes it easy to reuse on other platforms and in other cases. An open source virtual machine created for the web would solve this problem wondrously. Wink.

There’s also the principle that software that just works isn’t good enough. Yes, I’m looking at you, software quality. In an industry as huge and abundant as the web, where so many people work, and so much money is thrown around, I’m pretty sure that the point where quality makes the difference has been reached. Mindsets, products, and code that affect the web can learn a thing or two from the solid, future-proof visions of respectable companies and the impeccable craftsmanship that you can find in a lot of open source projects.

One last thing that I want to mention is what software engineers call premature optimization. The reason I’m mentioning it is that the web tends to take the advice against it too far. Virtually everything that was created for the web before the 2000s was created with nothing in mind apart from the need to have something functional, an attitude which more or less led to the current performance-lacking state of the web. People who are working in this domain should try to strike the crucial balance between delivering on time, having quality code, and making sure the performance will be satisfactory even if sites get thrice as big.

#Immediate Solutions

I see two things that could help the web a lot without requiring anyone to cast the Ring of Power into the fires of Mount Doom: a standardized and modern UI API, like any decent OS has, and a means of executing other programming languages on the client side. Neither of them is far-fetched, and both are pretty easy to put into practice, even on top of the current technology stack.

First up is the UI API. The key aspect of it should be future proofing. It’s more than obvious that due to the popularity of the web, people will use it everywhere they can, so this API should be modern, extensible, easy to use and learn. There should also be a way of translating these calls to HTML, CSS, and JavaScript to make it play nicely with the current flock of web browsers.
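
As a rough sketch of that translation step, consider the following. The widget names (Column, Label, Button), the `TAGS` mapping, and the `toHtml` function are all invented for illustration; a real API would need events, layout rules, and much more.

```javascript
// A hypothetical UI description: structure and styling live in one object tree.
// The widget names ("Column", "Label", "Button") are invented for this sketch.
const loginScreen = {
  widget: "Column",
  style: { padding: "8px" },
  children: [
    { widget: "Label", text: "Hello", style: { color: "navy" } },
    { widget: "Button", text: "Sign in", style: {} },
  ],
};

// Assumed mapping from the invented widgets to existing HTML tags.
const TAGS = { Column: "div", Label: "span", Button: "button" };

function escapeHtml(text) {
  return text.replace(/&/g, "&amp;").replace(/</g, "&lt;").replace(/>/g, "&gt;");
}

// Translates one widget (and its children) into an HTML string with inline CSS.
function toHtml(node) {
  const tag = TAGS[node.widget];
  const css = Object.entries(node.style)
    .map(([property, value]) => `${property}: ${value}`)
    .join("; ");
  const styleAttr = css ? ` style="${css}"` : "";
  const inner = node.children
    ? node.children.map(toHtml).join("")
    : escapeHtml(node.text);
  return `<${tag}${styleAttr}>${inner}</${tag}>`;
}

console.log(toHtml(loginScreen));
// <div style="padding: 8px"><span style="color: navy">Hello</span><button>Sign in</button></div>
```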

For the second issue, the simplest way would be to integrate a virtual machine that executes general bytecode in a secure sandbox. I’m pretty sure that a NaCl-inspired LLVM implementation would do just fine for everyone trying to rid themselves of having to write so much JavaScript.
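
To make the idea less abstract, here is a toy stack machine. The opcodes are made up for this sketch and have nothing to do with NaCl, LLVM, or any real bytecode format; the only point is that the interpreter compares single bytes instead of parsing text, and that the program can touch nothing but the interpreter’s own stack.

```javascript
// A toy stack machine. The opcodes are invented for this sketch and are
// unrelated to NaCl, LLVM, or any real bytecode format.
const OP = { PUSH: 0x01, ADD: 0x02, MUL: 0x03, HALT: 0xff };

// Executes a Uint8Array program and returns the value left on top of the stack.
function run(program) {
  const stack = [];
  let pc = 0; // program counter
  while (pc < program.length) {
    const opcode = program[pc++];
    switch (opcode) {
      case OP.PUSH:
        stack.push(program[pc++]); // the next byte is the operand
        break;
      case OP.ADD:
        stack.push(stack.pop() + stack.pop());
        break;
      case OP.MUL:
        stack.push(stack.pop() * stack.pop());
        break;
      case OP.HALT:
        return stack.pop();
      default:
        throw new Error(`Unknown opcode ${opcode} at offset ${pc - 1}`);
    }
  }
  throw new Error("Program ended without HALT");
}

// (2 + 3) * 4, which any source language could compile down to these 9 bytes.
const program = new Uint8Array([
  OP.PUSH, 2, OP.PUSH, 3, OP.ADD, OP.PUSH, 4, OP.MUL, OP.HALT,
]);

console.log(run(program)); // 20
```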

#The Long Term Vision

A much more ambitious goal for the whole web would be to get rid of browsers. Their current role is to run web pages, to handle several of them at once, and to present you with a simple, slick UI for switching between these pages. This is something that an operating system is built for: running multiple processes at once and having a simple UI that helps you switch between them. The obvious question is now: Why not integrate the technologies behind the web into the operating system and let each web page run as a different window?

People would be able to give the operating system the address of a web page, which would then open as a simple window with only the web page inside. There should be no differentiation between native apps and web apps apart from their design and functionality, which should be restricted for the apps coming from the web.

#Conclusion?

The current state of the web leans towards the deplorable, but at the same time it’s still doing its job. What this article tries to point out is that it can be so much better. From ever-changing, ever-patched, laggy and totalitarian in its ideas, it can become that clean, fast, and enjoyable experience we all seek.

The web has some of the best design I’ve seen in years. People are writing newspaper-quality editorials on blogs. The amount and quality of the services and things you can buy online is the best, bar none. So, why should this stylish free medium of expression rely on such old and stubborn ideals as it does now? Why does this experience have to be spoiled by technological problems that were solved many years ago?

The web needs to change for the better, and if there’s a place where its revolution can start, it’s on the web itself.

UPDATE:

It seems like Apple, Google, Microsoft, and Mozilla are working on a standardized bytecode for the web called WebAssembly. The original post is on Ars Technica.
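
As a small taste of what that bytecode looks like from the JavaScript side, here is a sketch that assumes a runtime exposing the WebAssembly JavaScript API (`WebAssembly.Module` and `WebAssembly.Instance`). The module bytes are hand-assembled by me for this example and export a single function that I named add.

```javascript
// A hand-assembled WebAssembly module exporting add(a, b) for 32-bit integers.
const wasmBytes = new Uint8Array([
  0x00, 0x61, 0x73, 0x6d, // magic number: "\0asm"
  0x01, 0x00, 0x00, 0x00, // binary format version 1
  // type section: one function type, (i32, i32) -> i32
  0x01, 0x07, 0x01, 0x60, 0x02, 0x7f, 0x7f, 0x01, 0x7f,
  // function section: one function, using type 0
  0x03, 0x02, 0x01, 0x00,
  // export section: export function 0 under the name "add"
  0x07, 0x07, 0x01, 0x03, 0x61, 0x64, 0x64, 0x00, 0x00,
  // code section: local.get 0, local.get 1, i32.add, end
  0x0a, 0x09, 0x01, 0x07, 0x00, 0x20, 0x00, 0x20, 0x01, 0x6a, 0x0b,
]);

// Synchronous compilation is fine for a module this small.
const wasmModule = new WebAssembly.Module(wasmBytes);
const wasmInstance = new WebAssembly.Instance(wasmModule);
const add = wasmInstance.exports.add;

console.log(wasmBytes.length); // 41 bytes for the whole module
console.log(add(2, 3));        // 5
```

Nobody is expected to write these bytes by hand; the idea is that a compiler for the language of your choice produces them.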